Build AI Intuition

Don't just prompt. Learn how to direct thinking machines.

Note on bootcamps: I’m sending out details soon for the first dates of my AI 301 bootcamps. These are for subscribers only so I can manage the audience (it’s free anyhow). They will start after next week since I’m traveling for work. Thanks for reading and I’m looking forward to running Claude Code with this community!

Microsoft Word cannot take your job. But AI can.

We can’t learn AI the way we learned Word, as software with menus and buttons. AI is an autonomous machine that thinks. Learning AI means learning how to direct it.

I call that skill AI intuition.

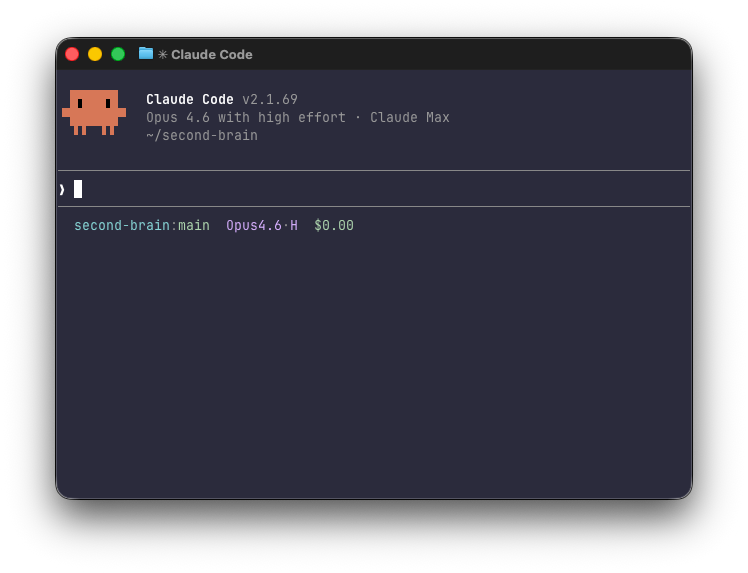

The terminal: raw AI power

A new litigator doesn’t first-chair a trial. A junior M&A lawyer doesn’t run the deal. You build judgment through reps before you’re trusted with the whole thing.

AI intuition works the same way. You don’t start by orchestrating five agents to draft and research all at once. You start by learning what a single agent can and can’t do, managing down the hallucination risk that keeps lawyers wary of AI.

To build AI intuition, I recommend that lawyers start learning AI in the terminal.

The terminal is the engine room of the computer. It’s a black screen with a text line and a cursor. No menus, no buttons. Just you and the AI.

Yes, you trade away the visual interface of a desktop app or website. But in exchange you see up close the fullness of raw AI power.

It’s like a bucket of Lego bricks where you build whatever you want, versus a model kit to build just one or two things. That $130 Simba looks 100x nicer than anything I build on my own. But it’s expensive, and I may just build it once and never touch it again. General purpose vs single purpose.

AI in the terminal (aka the command-line interface) offers the richest feature set directly at your fingertips: it codes, writes custom skills, personalizes its style to yours, searches the web, edits files on your computer and plugs into a vast software ecosystem (Google Workspace, anyone?).

Other AI interfaces can offer a lot of these, but they don’t offer all of them all at once. They often gate features behind UI that’s painfully manual.

Note: Yes, this is basic for engineers. But we’re lawyers, experts in a different domain, and we’re experimenting with new skills to grow with the times.

A bucket of Legos or a model kit

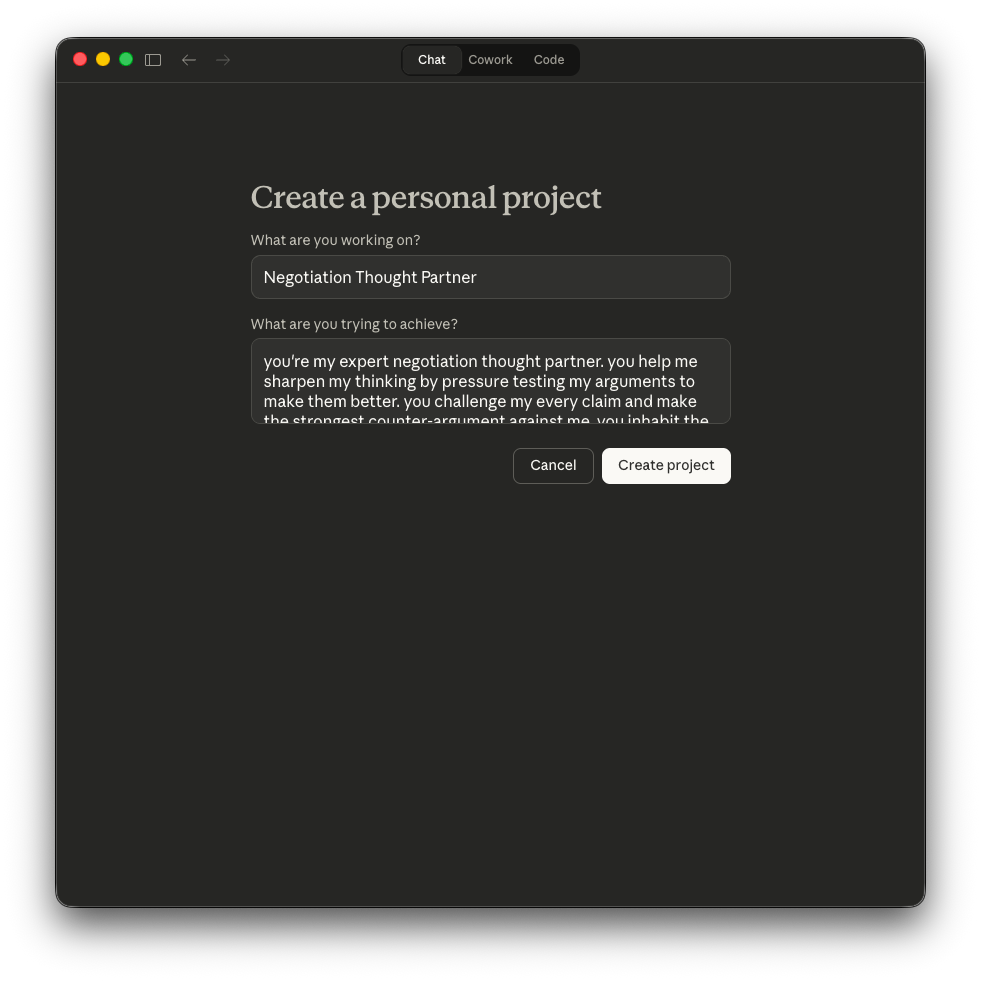

Imagine I want to create an AI sparring partner to help me negotiate better. I want it to attack my arguments to make them stronger.

In Claude Code, this single text prompt will:

- brainstorm other ideas with me to make it even better
- research the internet for negotiation frameworks
- make me a Google Doc summary
- write down its instructions so I can improve it over time.

I wrote this out in about 30 seconds:

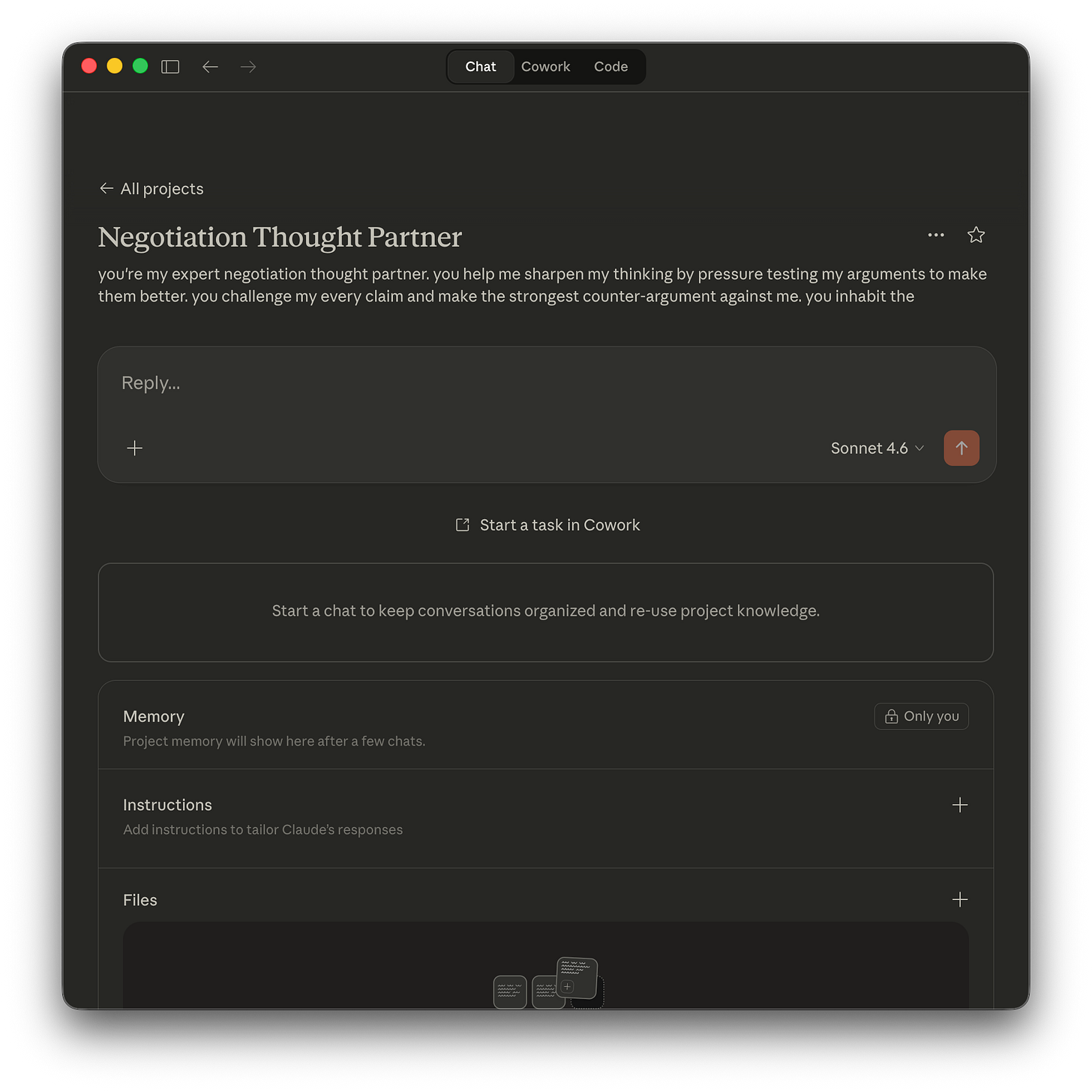

Compare that to Claude the vanilla chatbot, where I have to create a Project for these instructions to persist. Same company, different interface. I manually click around and fill in the basic instructions. The next screen gives me a textbox where I can ask it to search the web and make a Google Doc. But it takes lots of clicking around, navigating the curated experience Anthropic set up for me.

Is it terrible? Of course not! I’m not doing this to pick on apps. I use apps all the time, and they do have huge advantages over a bare terminal.

My point, however, is that the terminal will always be faster and more powerful. You can get a lot more done in it.

In exchange, though, the learning curve is real. Yet it’s that learning curve that sets you up best to build AI intuition and one day orchestrate AI agents.

For example, I can spin up three agents in a single command, directing them toward a common task. It’s trivially easy and fast.

Polished AI products make trade-offs to serve up a friendlier experience. That’s a reasonable choice for most users. But for building intuition, the friction is the teacher.

Once you have the intuition…

Okay, but what’s the end goal? What differences are there between a lawyer with AI intuition and one without?

Imagine a lawyer is reviewing a product feature that touches on privacy laws in the U.S., France and Brazil. Three regulatory regimes, overlapping but distinct.

The lawyer without AI intuition may start a new chat thread and dump in the product requirements document along with three statutory texts and secondary sources. That would likely produce a jumbled response, and the hallucination risk is real.

How does a lawyer with AI intuition handle this?

Step one: run three parallel agents (one per jurisdiction), each loaded with only that jurisdiction’s statutory text. Each agent maps the obligations that apply to the feature. We get three independent outputs that haven’t cross-contaminated. Better yet, since these are standalone clean analyses, we can layer in case law and those regulators’ latest enforcement actions, including penalties.
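For readers who want to see the shape of step one, here is a minimal Python sketch. It is an illustration under assumptions, not how Claude Code does it internally: `fake_agent` is a hypothetical stand-in for whatever agent you actually call, and `build_prompt` and `run_isolated_agents` are names I made up. The point is only that each agent's prompt contains one jurisdiction's text and nothing else.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict


def fake_agent(prompt: str) -> str:
    # Hypothetical stand-in for a real agent call; swap in your own tool.
    return f"[agent received {len(prompt)} characters]"


def build_prompt(jurisdiction: str, statute_text: str, feature: str) -> str:
    # Each prompt carries exactly one jurisdiction's text,
    # so the analyses cannot cross-contaminate.
    return (
        f"Jurisdiction: {jurisdiction}\n"
        f"Statutory text:\n{statute_text}\n\n"
        f"Feature under review:\n{feature}\n\n"
        "Map the obligations that apply to this feature. "
        "Rely only on the statutory text above."
    )


def run_isolated_agents(
    statutes: Dict[str, str],
    feature: str,
    agent: Callable[[str], str],
) -> Dict[str, str]:
    # One prompt per jurisdiction, all submitted at once.
    prompts = {j: build_prompt(j, text, feature) for j, text in statutes.items()}
    with ThreadPoolExecutor(max_workers=max(1, len(prompts))) as pool:
        futures = {j: pool.submit(agent, p) for j, p in prompts.items()}
        return {j: f.result() for j, f in futures.items()}


if __name__ == "__main__":
    # Placeholder inputs; paste in the real texts yourself.
    statutes = {
        "U.S.": "<U.S. statutory text>",
        "France": "<French statutory text>",
        "Brazil": "<Brazilian statutory text>",
    }
    outputs = run_isolated_agents(statutes, "<feature description>", fake_agent)
    for jurisdiction, output in outputs.items():
        print(jurisdiction, "->", output)
```

The design choice that matters is the dictionary keyed by jurisdiction: the outputs stay separate until you decide to combine them.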

Step two: pull the three outputs into a fourth new agent and ask it to build a comparison matrix. Where do the regimes align, where do they conflict and where does one regime have requirements the others don’t? The agent does this well because it’s working from distilled outputs, not raw statutory text in multiple languages.
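To make the comparison matrix concrete, here is a rough Python sketch of the structure I would ask the fourth agent to produce. Everything in it is an assumption for illustration: the `build_matrix` and `classify` names are mine, the sample positions are placeholders rather than statements of law, and exact string matching is a crude proxy for "these regimes align." The real comparison still needs the agent and a lawyer's judgment.

```python
from typing import Dict

# Distilled outputs: jurisdiction -> {topic: position}.
Outputs = Dict[str, Dict[str, str]]


def build_matrix(outputs: Outputs) -> Dict[str, Dict[str, str]]:
    # Rows are topics, columns are jurisdictions; "" means the regime is silent.
    topics = sorted({t for positions in outputs.values() for t in positions})
    return {
        topic: {j: outputs[j].get(topic, "") for j in outputs}
        for topic in topics
    }


def classify(row: Dict[str, str]) -> str:
    stated = [position for position in row.values() if position]
    if len(set(stated)) > 1:
        return "conflict"  # regimes disagree
    if len(stated) == 1:
        return "unique"    # only one regime has this requirement
    if len(stated) == len(row):
        return "align"     # every regime states the same position
    return "partial"       # some regimes agree, others are silent


if __name__ == "__main__":
    # Placeholder positions for illustration only, not legal conclusions.
    outputs = {
        "U.S.": {"consent": "notice and opt-out"},
        "France": {"consent": "explicit consent", "topic B": "placeholder"},
        "Brazil": {"consent": "placeholder"},
    }
    for topic, row in build_matrix(outputs).items():
        print(topic, classify(row), row)
```

Rows tagged "conflict" are the ones to send back for pressure-testing.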

Step three: take the conflicts and run each one back through a jurisdiction-specific agent to pressure-test. “The France output says explicit consent is required. The U.S. output says notice and opt-out is sufficient. Confirm the France position and cite the specific CNIL enforcement action. Then cite the U.S. state court case supporting the U.S. position.”

We do this because the synthesis step is where hallucination risk spikes. The agent is pattern-matching across regimes and may have smoothed over a real distinction to make the matrix clean.
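If you want to template the pressure-test, a small Python sketch could look like the following. The function name `pressure_test_prompts` and the prompt wording are my own assumptions, and the closing instruction to admit when no source is found is an addition of mine. Each prompt goes back to the agent that holds only that jurisdiction's materials.

```python
from typing import Dict


def pressure_test_prompts(topic: str, row: Dict[str, str]) -> Dict[str, str]:
    """Build one follow-up prompt per jurisdiction for a conflicting topic."""
    prompts = {}
    for jurisdiction, position in row.items():
        # Summarize what the other regimes said so the agent sees the conflict.
        others = "; ".join(
            f"{j}: {p}" for j, p in row.items() if j != jurisdiction
        )
        prompts[jurisdiction] = (
            f"Topic: {topic}\n"
            f"The {jurisdiction} output says: {position}\n"
            f"Other regimes say: {others}\n"
            f"Confirm or correct the {jurisdiction} position and cite the "
            "specific source. If you cannot find a source, say so."
        )
    return prompts


if __name__ == "__main__":
    # Positions taken from the sample prompt in the text, not legal conclusions.
    row = {"France": "explicit consent", "U.S.": "notice and opt-out"}
    for jurisdiction, prompt in pressure_test_prompts("consent", row).items():
        print(f"--- send to the {jurisdiction} agent ---")
        print(prompt)
```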

Each jurisdiction stayed isolated until we chose to combine them. When we combined them we checked the seams.

Staying power

Tools change every quarter. The way people built agents last year is already outdated. That knowledge goes stale. The instinct for splitting a problem apart, keeping the pieces clean and checking the seams when you put them back together: that compounds.

That’s AI intuition. I’m building a bootcamp to teach it. Lawyers in the terminal, building the instinct from scratch.

Subscribe to get in when it opens.