Teach Your Lawyer's AI to Think Like You

You already teach new counsel how you think. Help their machine read it too.

Training new lawyers is a drag.

You hire a new outside counsel or junior lawyer. You hand them years of examples. Precedents you like, formats you don’t. Then you meet with them to explain what “good” looks like.

And their work may still be off the mark.

That calibration lives in emails and tribal knowledge nobody can search. It takes months, even years, to nail down. Then they leave and you start over again.

What if it took thirty seconds?

Your legal judgment, in a text file

We carried business cards for a century because both sides gave and both sides gained: our identity, pocket-sized.

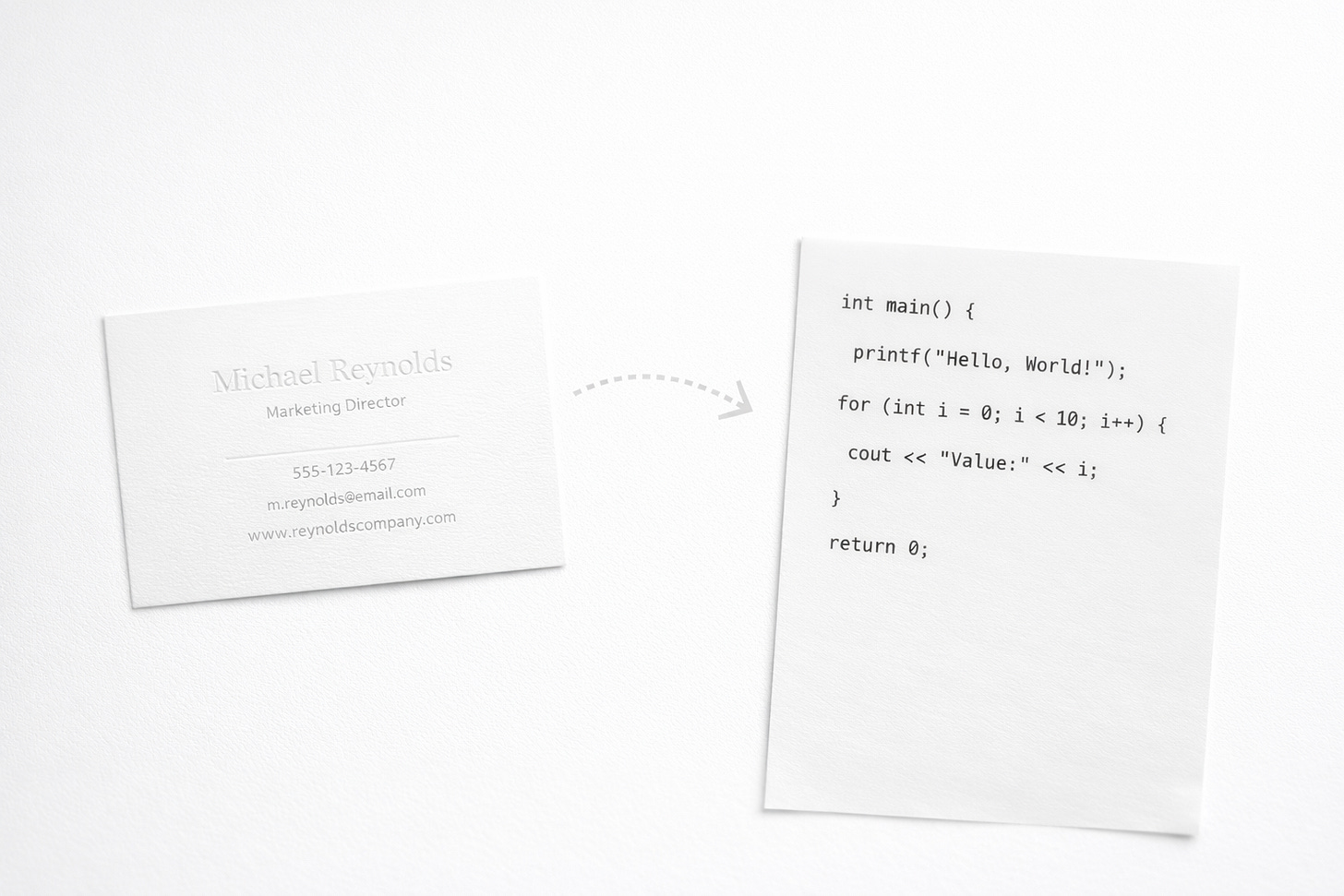

Here’s a radical thought experiment for the AI era. Let’s exchange a file that doesn’t tell counsel who we are, but how we think.

First, some AI context. When we load up an AI agent, it first reads a set of plain-text instructions, often called the system prompt. Think of it as standing orders that govern the AI’s priorities, reasoning style and defaults. Coding agents keep theirs in a file: OpenAI’s Codex reads AGENTS.md, Anthropic’s Claude Code reads CLAUDE.md, but the idea is the same across platforms.

What if we wrote a file — call it the mindset.md — so your new lawyer and their AI have a reference of legal judgment to benchmark their work against?

Imagine a junior lawyer drafting her first legal analysis. It’s academic, maybe too prescriptive. She has her AI run it against my mindset.md before she ships to me.

She’s pressure-testing her draft against my mental model for how I think and decide. The AI can even make it a training session by giving constructive feedback and explaining why.

“Your advice gave a single legal recommendation, but Eric prefers an options space of three alternatives, weighing the pros and cons of each. This way we can give product teams breathing room to make smart choices while balancing legal risk.”
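For the technically curious, here is a minimal sketch of what "running a draft against my mindset.md" could look like under the hood. It assumes the common chat convention of a system message followed by a user message. The function name and the wording of the instructions are my own illustration, not any vendor's API, and the sketch stops short of calling a model.

```python
from __future__ import annotations


def build_review_messages(mindset_text: str, draft_text: str) -> list[dict[str, str]]:
    """Pair the client's standing preferences with a draft for review.

    Hypothetical helper: the preferences go in as the system message,
    the draft goes in as the user message.
    """
    system = (
        "You are reviewing a legal draft before it goes to the client.\n"
        "The client's preferences are below. Flag each place the draft "
        "departs from them and explain why the preference exists.\n\n"
        + mindset_text.strip()
    )
    user = "Draft to review:\n\n" + draft_text.strip()
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": user},
    ]


if __name__ == "__main__":
    # Inline samples stand in for the contents of mindset.md and the draft.
    mindset = "Options space: analyze multiple alternatives, pressure-test them against each other."
    draft = "We recommend the product team adopt approach A."
    for message in build_review_messages(mindset, draft):
        print(f"--- {message['role']} ---")
        print(message["content"])
```

The resulting list is what you would hand to whichever chat model your counsel already uses. The point is only that the file rides along with every draft, so nobody has to remember to paste it in.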

Rethinking how we work

This sounds out there. But we already do this every day; we may just not call it that.

Today, we tailor our message for the audience, based on what we think we know of them.

Today, we give counsel examples of advice we like or don’t like. We ask, but more often hint and imply: please follow this, don’t reinvent the wheel.

I know people who make AI “profiles” of specific executives and run even simple emails against that profile to make sure they land their points.

The mindset.md is just an extension of that, turned into a professional practice: it encodes my judgment so a machine can read it too.

Here’s an excerpt from mine:

TL;DR upfront: help me understand the entire question in a paragraph. Then suggest next steps, and only then frame your analysis.

Options space: analyze multiple alternatives, pressure-test them against each other.

Show me your line of reasoning: always give citations so I can drill deeper into your analysis if I choose to.

No bare penalty recitations. “4% of global turnover” tells me nothing I can’t google. Tell me what a rule means for this specific product decision. What is the specific impact and the likelihood of enforcement?

Regulatory color: has the regulator spoken publicly? Brought enforcement actions? I want to know how they actually behave, not just the statute.

Competitive benchmarks: what are peers doing? Earnings calls, industry blogs, press reviews. Don’t analyze my product in a vacuum.

Show me you understand my product and tech: have you used my product? Show me screenshots, mockups, photos of the thing I’m building, not just the legal framework around it.
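To show how little it takes to make an excerpt like this machine-readable, here is a rough sketch that turns each line into a checklist item. It assumes one preference per line with an optional "Label: guidance" shape, which is simply how I happened to write mine. The function name is hypothetical, and a real file could be structured any way you like.

```python
from __future__ import annotations


def parse_preferences(text: str) -> list[tuple[str, str]]:
    """Split each non-empty line into (label, guidance) at the first colon."""
    prefs: list[tuple[str, str]] = []
    for line in text.splitlines():
        line = line.strip()
        if not line:
            continue
        label, sep, guidance = line.partition(":")
        if sep:
            prefs.append((label.strip(), guidance.strip()))
        else:
            # No colon on the line: keep the whole line as unlabeled guidance.
            prefs.append(("", line))
    return prefs


if __name__ == "__main__":
    excerpt = """\
TL;DR upfront: help me understand the entire question in a paragraph.

Options space: analyze multiple alternatives, pressure-test them against each other.
"""
    for label, guidance in parse_preferences(excerpt):
        print(f"[ ] {label}: {guidance}")
```

A reviewer, human or AI, can then walk the list item by item instead of reading the file as one block of prose.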

Changing professional habits is hard. But it’s just one example of how lawyers can adapt to the new world of knowledge work.

Machine-readable judgment

Every time you switch counsel, you lose months of calibration. New firm, same onboarding grind, all those preferences you spent a year teaching the last team wiped clean.

A mindset.md doesn’t just speed up onboarding. It’s portable, moving with you across firms, across matters, across platforms, so the calibration follows you instead of dying with the relationship.

The commercial internet spent two decades blocking bots and is now rebuilding for AI agents. Legal work is heading for the same shift, and the lawyers who make their judgment machine-readable will have a head start.

I wrote about the plumbing problem, where AI nails the thinking but breaks on the connections between tools. A mindset.md is one small piece of plumbing that actually works, a machine-readable file that carries your professional judgment into every AI interaction your counsel runs.

The question is whether you give AI something to work with or let it guess.[1] You don’t even need to write the file yourself.[2]

What would yours say?

Think about the last time a new counsel got you wrong. Not the law, but you: the framing, the risk call, the level of detail. If you could write it down in one page, what would it say?

The lawyers who’ve done that work will plug in and go. The rest will calibrate with memos and phone calls, one matter at a time.

Subscribers: bootcamp details are in your email header.

[1] What’s fair is fair. Outside counsel could send one back to me. Call it a firm.md, with guidance for clients on whether in-house gave enough context, made clear asks and set explicit timing.

For the record, this is still a thought experiment. I haven’t tried it with anyone yet. If there are lawyers willing to experiment, reach out.

[2] Point your AI at work product you already have: sent emails to counsel, redline comments, feedback on memos. Ask it to find the patterns. “Read these fifty emails and tell me how I give feedback. What do I ask for that I never get on the first draft?”

I did the exercise. The term “options space” kept surfacing, because I was always asking for a baseline and alternatives to compare against. The AI found a preference so ingrained I’d never thought to articulate it.

Obsessed with this. Think about how this can impact switching costs for GCs. Rapid acceleration from an old-school firm to one that can ingest a mindset.md and hit the ground running. Even more powerful for a firm that can ingest a client's feedback seamlessly and build its own mindset.md that is continually improved through each interaction. I am thinking we could even build some standard mindset files for new clients to choose from at onboarding, like a jumping-off point.